
If you've been anywhere near the marketing world recently, you'll have noticed a pattern. Someone coins a new acronym, agencies build a service page around it, and suddenly every newsletter is telling you that everything you knew about search is wrong.

AEO. GEO. LLMO. AI SEO. The terminology has been flying around since 2024, and the noise hasn't exactly helped anyone make a clear decision.

Here's the thing: the underlying shift is real. People are genuinely changing how they find information. When someone asks ChatGPT whether they should hire an SEO agency in Cambridge, or asks Perplexity to explain the difference between PPC and paid social, those queries aren't hitting Google first. And if your business isn't showing up in those AI-generated answers, you're invisible to a chunk of your potential audience.

But that doesn't mean you need to throw everything out and start from scratch. Far from it.

This post cuts through the hype and gives you a practical picture of what AEO and GEO actually are, what the research says works, and what you should genuinely be doing about it in 2026. No myths. No manufactured urgency. Just the honest version.

Let's Start With the Jargon

Before anything else, it's worth clearing up what these terms actually mean. The confusion is legitimate; the industry hasn't agreed on definitions, and that ambiguity is being used to sell things.

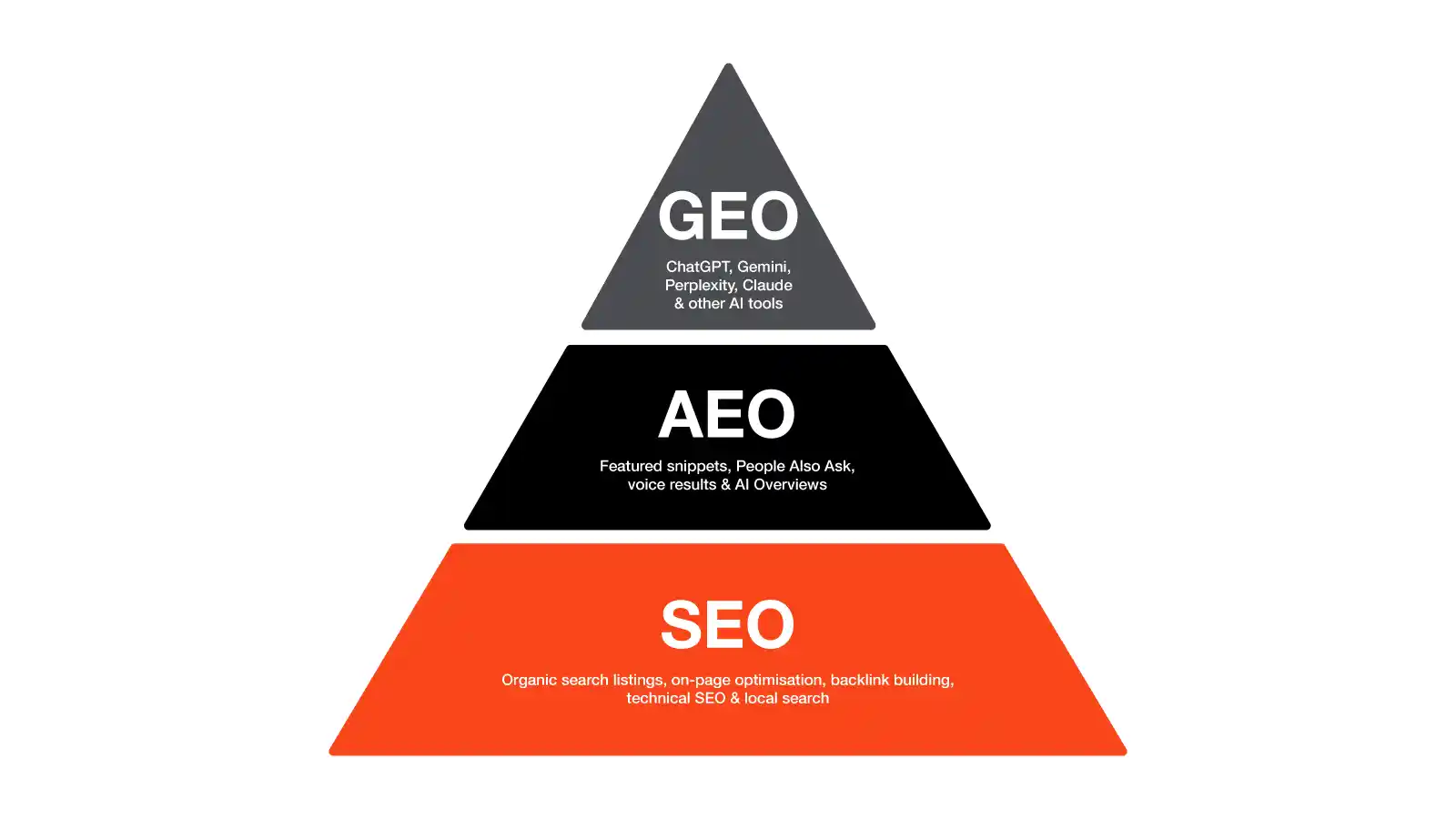

AEO (Answer Engine Optimisation) refers to structuring your content so it gets pulled out and presented as a direct answer inside AI-powered interfaces. Think featured snippets, People Also Ask boxes, voice results, and AI Overviews on Google.

GEO (Generative Engine Optimisation) is the next layer. It's about making sure your brand and content get cited or summarised inside conversational AI tools: ChatGPT, Gemini, Perplexity, Claude, and others.

LLMO (Large Language Model Optimisation) is essentially the same as GEO but emerged from practitioner communities rather than academia. The two overlap considerably.

AI SEO is an umbrella term that covers all of the above, plus using AI tools to do traditional SEO work faster.

The table below shows how each approach differs in practice:

The Myth That AEO Has Nothing to Do With SEO

Look, this is marketing. New terms emerge, agencies build services around them, and the pitch naturally leans into what's different rather than what's familiar. We're not immune to that ourselves. When a genuine shift happens in the industry, you talk about it, you package it, and you help clients understand why it matters.

And the shift is real. AEO and GEO do represent a change in how search is evolving, and there are specific things worth focusing on that you might not have prioritised with a traditional SEO lens. But the idea that they're entirely separate disciplines, requiring a fresh budget and a completely different strategy, doesn't really hold up when you look at how these systems actually work.

LLMs largely pull from search engines. When ChatGPT or Perplexity needs current information, it runs a search, usually on Google or Bing, and synthesises what it finds. So if you're already doing SEO well, the content you've built, the authority you've earned, and the technical foundations you've put in place are already working in your favour with AI search too.

The consensus from practitioners who've looked at the data is that optimising for LLM citations and optimising for traditional search are, in practice, about 80% the same job. If you're succeeding with SEO, there's a reasonable chance you're already performing better in AI search than you think.

The remaining 20% is where the genuinely useful adjustments live: how you structure content, how you cite sources, how visible you are on third-party platforms. Those things matter, and they're worth addressing deliberately. But they sit on top of good SEO, not instead of it.

Understanding the LLM Landscape

Before optimising for AI search, it helps to understand which platforms actually matter, who's using them, and how each one decides what to cite.

Here's the current picture, based on Similarweb traffic data published in their 2026 AI Brand Visibility Index, AI Multiple's LLM market share tracker, and Recon Analytics' paid subscriber data referenced by Stackmatix:

*Copilot's market share figures vary considerably depending on what you're measuring. Web traffic share sits around 1%, but paid enterprise subscriber share sits around 11.5% per Recon Analytics' January 2026 data. The gap reflects the fact that a large proportion of Copilot usage is now embedded directly inside Microsoft 365 applications rather than happening via a browser. In other words, it doesn't show up in web traffic data, but it's still very much in use.

A few things are worth drawing out from this. First, the AI search market is fragmenting fast. ChatGPT's web traffic share dropped from around 87% in January 2025 to around 64.5% in January 2026, while Gemini grew from roughly 5.7% to 21.5% over the same period, according to Similarweb data referenced in Wallaroo Media's Q1 2026 market share analysis.

Second, different platforms cite different types of sources. Research published by Yext in March 2026, analysing 17.2 million AI citations across industries, found that Gemini favours official brand websites and established sources, Perplexity pulls from a stable mix of official and directory sources, ChatGPT shows more industry-specific variation, and Claude cites user-generated content at two to four times the rate of other models. Microsoft Copilot, pulling from Bing's index, broadly follows similar patterns to ChatGPT in terms of what content it values, with a heavier skew toward structured, authoritative sources that perform well in traditional search.

Third, and worth keeping in perspective: when AI platforms do refer traffic to external websites, that traffic converts well. Similarweb's data shows users referred from ChatGPT convert on transactional sites at around 7%, compared to 5% for Google referrals. Volume is low relative to organic search, but quality is high.
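It's easy to blur percentage points and relative change when reading the share figures above, so here is a minimal Python sketch that separates the two. The function name `share_change` is ours, and the inputs are the rounded "around" figures quoted above, so treat the outputs as approximate.

```python
def share_change(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change in %) between two shares."""
    points = after - before
    relative = points / before * 100
    return points, relative


if __name__ == "__main__":
    # Rounded web-traffic shares quoted above (January 2025 -> January 2026).
    for name, before, after in [("ChatGPT", 87.0, 64.5), ("Gemini", 5.7, 21.5)]:
        points, relative = share_change(before, after)
        print(f"{name}: {points:+.1f} points, {relative:+.1f}% relative")

    # Conversion rates quoted above: ~7% from ChatGPT referrals vs ~5% from Google.
    print(f"Conversion ratio: {7 / 5:.1f}x")
```

On those figures, ChatGPT lost about 22.5 points, roughly a quarter of its starting share, while Gemini's share grew to a little under four times its starting level. ChatGPT referrals convert at about 1.4 times the Google rate.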

The practical implication of all this: don't optimise for one platform in isolation. The content that gets cited by Perplexity (clear, sourced, specific) also tends to get cited by ChatGPT and Gemini. And building a credible presence across your own site, third-party platforms, and community spaces improves your standing across all of them simultaneously.

What the Research Actually Says

There's one piece of academic research worth anchoring this conversation to, because it's the most rigorous primary evidence we have.

A paper titled GEO: Generative Engine Optimization, published at the 30th ACM SIGKDD Conference in Barcelona in August 2024 by researchers from IIT Delhi, Princeton University, Georgia Tech, and the Allen Institute for AI, tested nine content optimisation strategies against a benchmark of 10,000 diverse queries to measure their effect on AI citation rates.

The headline finding: GEO strategies can boost visibility in generative engine responses by up to 40%.

The specific methods the paper tested, and their effects, are below:

The paper is careful not to present exact percentages for every individual method, as the effects vary by domain and query type. The top-line 40% figure refers to the best-performing combination of strategies. Any source quoting precise percentages per method (e.g. "citations = +40%, statistics = +37%") is extrapolating beyond what the paper actually states. It's worth being upfront about that.

What is clear from the research: content that demonstrates genuine expertise, cites its sources, and presents information cleanly performs significantly better in AI search than content that relies on keyword repetition.

Practical Optimisations That Actually Work

Here's what's worth doing. Each of these is grounded in how AI systems actually retrieve and evaluate content, based on the sources we've cited throughout this post.

1. Answer the question directly, then expand

AI systems extract passages, not whole pages. If your answer is buried three paragraphs down, there's a good chance it won't get pulled. Lead with the clearest version of your answer, then back it up beneath it. Think of each section as something that should make sense if lifted out of the page entirely.

2. Cite your sources

Unsourced claims don't get cited. Sourced ones do. This is one of the highest-leverage changes you can make to existing content. Go through your key pages and ask: is every factual claim either common knowledge or attributed to a named, credible source? If not, fix that. The ACM SIGKDD paper makes this point explicitly.

3. Structure headings around how people actually ask questions

Not keyword-stuffed headers, but the kind of question someone would type into ChatGPT. "What is the difference between SEO and PPC?" works better than "SEO vs PPC Benefits." You're trying to match the way a real person phrases a query, because that's what AI systems are responding to.

4. Build your presence on third-party platforms, especially community ones

This is the one most people overlook. Different AI platforms pull from different source types, and community platforms feature heavily across all of them.

Reddit, LinkedIn, and YouTube are among the most-referenced domains across major LLMs. Yext's citation research found that across 17.2 million AI citations, listings and third-party content often outperformed brand websites in citation frequency.

This makes sense when you consider that Claude in particular cites user-generated content at rates two to four times higher than other models.

What this means practically: genuine participation in the places where your audience gathers online matters more than it ever did. Answer questions in relevant subreddits. Publish considered LinkedIn content. Get mentioned in industry roundups. Earn reviews on Trustpilot and Google. Wikipedia is worth a mention too; it sounds dated, but LLMs cite it constantly. If your brand or sector has a Wikipedia presence, make sure it's accurate.

The broader point here is what some practitioners are calling "relevance engineering": being visible and credible across the web, not just on your own site. For most SMEs, even modest, consistent participation in relevant online communities will make a meaningful difference.

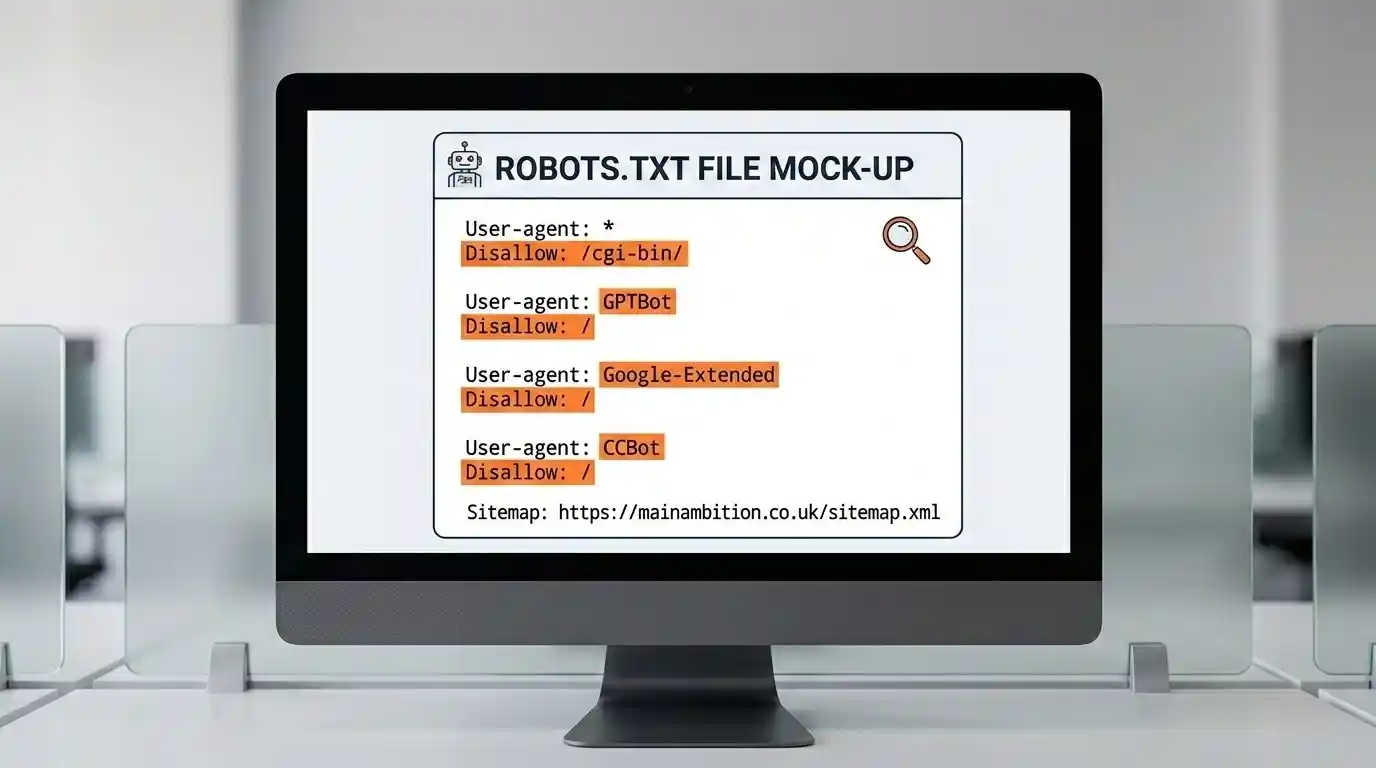

5. Check your robots.txt

If GPTBot, ClaudeBot, PerplexityBot, or Google-Extended is blocked in your robots.txt, the corresponding platform is being told not to read your content, and it can't cite what it can't read. It sounds obvious, but a surprising number of sites block these agents, often through automated technical configurations. Check it.
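A minimal Python sketch of that check, using the standard library's `urllib.robotparser`. The function name `blocked_ai_crawlers` and the sample robots.txt are ours, made up for illustration, and the list of user-agent tokens is simply the four named above; vendors add and rename crawlers, so check their current documentation.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in this post; not an exhaustive or permanent list.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawler tokens that robots_txt disallows for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks two AI crawlers and allows the rest.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Disallow: /admin/
"""

if __name__ == "__main__":
    print(blocked_ai_crawlers(SAMPLE))
```

To test a live site instead of a pasted string, `RobotFileParser` can fetch the file itself via `set_url()` followed by `read()`. This only tells you what robots.txt says; firewall or CDN rules can block the same crawlers without appearing here.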

6. Add schema markup

FAQPage, HowTo, Article, and Organization schema (schema.org uses the US spelling for the type name) give AI systems structured context. It's one of the more straightforward technical improvements most sites can make, and the evidence base for its impact on structured data retrieval is well established.
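As an illustration, here is a minimal Python sketch that builds FAQPage markup as JSON-LD from question and answer pairs. The helper name `faq_jsonld` is ours; the `@type` and property names are schema.org's. It covers only the required basics, so validate the output with a structured data testing tool before relying on it.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    print(faq_jsonld([("What is AEO?", "Answer Engine Optimisation.")]))
```

The resulting string goes inside a `<script type="application/ld+json">` element on the page that visibly contains the same questions and answers.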

7. Show when your content was last updated

AI systems have a strong recency bias. Undated content, or content that looks old and untouched, consistently loses out to fresher material. Add a visible "last updated" date to your key pages and maintain it when you refresh the content.
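One way to keep the visible date and the machine-readable date in step is to generate both from the same value. The Python sketch below is an assumption about how you might wire that up, not a required pattern. The function names are ours, while `datePublished` and `dateModified` are schema.org Article properties.

```python
from datetime import date


def last_updated_html(updated: date) -> str:
    """Visible 'Last updated' line with a machine-readable <time> element."""
    label = f"{updated.day} {updated:%B %Y}"  # month name assumes an English locale
    return f'<p>Last updated: <time datetime="{updated.isoformat()}">{label}</time></p>'


def article_dates(published: date, updated: date) -> dict:
    """schema.org Article properties carrying the same dates as structured data."""
    if updated < published:
        raise ValueError("updated date cannot be earlier than published date")
    return {
        "@type": "Article",
        "datePublished": published.isoformat(),
        "dateModified": updated.isoformat(),
    }


if __name__ == "__main__":
    print(last_updated_html(date(2026, 3, 5)))
    print(article_dates(date(2025, 1, 10), date(2026, 3, 5)))
```

Only change the date when the content has genuinely been revised; the point is an accurate signal, not a fresh-looking one.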

8. Think in entities, not just keywords

Consistent naming of your brand, your services, and your topics helps LLMs build an accurate picture of who you are. If you call your service "SEO" in one place, "search engine optimisation" in another, and "organic search" somewhere else, that creates noise. Pick your language and use it consistently.
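A quick way to see how consistent your naming is: count how often each variant appears in your page copy. This is a rough Python sketch under simple assumptions (plain text input, whole-word matching, case-insensitive). The function name `variant_counts` and the sample sentence are ours.

```python
import re
from collections import Counter


def variant_counts(text: str, variants: list[str]) -> Counter:
    """Count whole-word, case-insensitive occurrences of each naming variant."""
    counts: Counter = Counter()
    for variant in variants:
        pattern = r"\b" + re.escape(variant) + r"\b"
        counts[variant] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


if __name__ == "__main__":
    # Hypothetical page copy mixing three names for the same service.
    page = (
        "We offer SEO. Our search engine optimisation team handles "
        "organic search and SEO audits."
    )
    variants = ["SEO", "search engine optimisation", "organic search"]
    for name, count in variant_counts(page, variants).most_common():
        print(f"{name}: {count}")
```

If one variant clearly dominates, that is usually the one to standardise on.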

9. Write FAQs that reflect real questions

FAQ sections are among the most extractable formats for AI systems. They're clean, self-contained, and designed to answer a single question per block. Build them around the actual questions your clients ask.

10. Monitor whether you're actually being cited

You can't improve what you're not measuring. Pick 20 of your most important queries, run them through ChatGPT, Perplexity, and Google monthly, and log what comes back. Who's being cited? Which pages? Do that consistently and you'll start to see patterns.

Paid tools like Otterly and Peec AI can automate this at scale. For most SMEs, the manual approach is fine.
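If you take the manual route, a small structured log makes the patterns easier to spot than a pile of screenshots. Here is a minimal Python sketch, assuming you paste in the cited URLs by hand after each run. The `Observation` fields and function names are our own invention, and the domains are placeholders.

```python
from collections import Counter
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass
class Observation:
    """One query run on one platform in one month (all field names are ours)."""
    month: str             # e.g. "2026-04"
    platform: str          # e.g. "ChatGPT"
    query: str
    cited_urls: list[str]


def domain_of(url: str) -> str:
    """Lower-cased host name without a leading 'www.'."""
    return urlparse(url).netloc.lower().removeprefix("www.")


def cited_domains(observations: list[Observation]) -> Counter:
    """Count, per domain, the number of answers that cited it at least once."""
    counts: Counter = Counter()
    for obs in observations:
        counts.update({domain_of(u) for u in obs.cited_urls})
    return counts


def citation_rate(observations: list[Observation], domain: str) -> float:
    """Share of logged answers that cite the given domain (0.0 for an empty log)."""
    if not observations:
        return 0.0
    hits = sum(domain in {domain_of(u) for u in obs.cited_urls} for obs in observations)
    return hits / len(observations)


if __name__ == "__main__":
    # Placeholder entries; replace with what the platforms actually return.
    log = [
        Observation("2026-04", "ChatGPT", "seo agency cambridge",
                    ["https://www.example.com/a", "https://competitor.example/x"]),
        Observation("2026-04", "Perplexity", "seo agency cambridge",
                    ["https://competitor.example/y"]),
    ]
    print(cited_domains(log).most_common())
    print(citation_rate(log, "example.com"))
```

Tracking the rate per month and per platform, rather than a single overall number, is what shows whether a change you made actually moved anything.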

The Stuff That Doesn't Work

llms.txt files are being positioned as a must-have. The idea is that you create a file telling AI systems how to understand your site. There is currently no published evidence demonstrating that this meaningfully improves citation rates. It's cheap to add as a signal, but it's not a lever, so don't pay for it as one.

Rewriting content in a robotic "AI-optimised" format, heavy on keyword density, actively hurts performance. The ACM SIGKDD paper is clear on this: keyword stuffing has a measurable negative effect on AI visibility.

Treating AEO as a completely separate budget from your existing SEO typically means doing less of both well. The foundations are shared. Build on what's working.

The Bigger Picture

The honest summary of where we are in 2026 is this: AI search is a real and growing channel, but it hasn't replaced traditional search. The businesses winning in AI-generated answers are, largely, the same businesses that win in traditional search. They just have content that's clearer, better sourced, and more genuinely useful.

The gap between good SEO and good AEO is narrower than most agencies would like you to believe. The meaningful adjustments are worth making, but they're adjustments, not a reinvention.

If you're not sure where your business currently stands in AI search, the best starting point is simply to ask. Open ChatGPT or Perplexity, type in the kind of question your ideal client would ask, and see what comes back. If it's your competitors and not you, that's useful information. And it's a gap we can help close.

Want to know how visible your business is in AI search results? We run AEO visibility audits for businesses across Cambridge, Suffolk, and the East of England. Get in touch and we'll take a look.

Sources

Aggarwal, P. et al. (2024). GEO: Generative Engine Optimization. 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, Barcelona.

https://dl.acm.org/doi/10.1145/3637528.3671900

Full paper (arXiv pre-print):

https://arxiv.org/abs/2311.09735

Similarweb (2026). AI Brand Visibility Index and Generative AI Statistics 2026.

https://www.similarweb.com/blog/marketing/geo/gen-ai-stats/

AI Multiple (2026). LLM Market Share: Compare Usage and Adoption.

https://aimultiple.com/llm-market-share

Wallaroo Media (2026). LLM Traffic and Market Share Q1 2026.

https://wallaroomedia.com/llm-traffic-market-share-q1-2026/

Yext Research (2026). How ChatGPT, Perplexity, Gemini, and Claude Actually Decide What to Cite.

https://yext.com/blog/2026/03/how-chatgpt-perplexity-gemini-claude-decide-what-to-cite

AI Visibility (2026). How AI Search Actually Works: Inside ChatGPT, Claude, Gemini, and Perplexity.

https://www.ai-visibility.org.uk/blog/how-ai-search-works/

Surferstack (2026). Perplexity vs ChatGPT vs Claude vs Gemini: Which AI Search Engine Sends the Most Referral Traffic in 2026.

Recon Analytics / Stackmatix (2026). Microsoft Copilot Adoption Statistics and Trends.

https://www.stackmatix.com/blog/copilot-market-adoption-trends